I don't care what the lesser bits are doing; they have to be made for the most significant to make.

Are ALL the lesser bits always on? That is, is your sequence value always 1,3,7,15,31....

If so, then adding 1 makes it 2,4,8,16,32.... Exactly one bit is set, and its position is the number of the first step not yet met (the value is 2^step).
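To see that arithmetic outside the PLC, here is a small Python sketch. Python is only for illustration, and the name `next_step_bit` is made up for this example. It assumes a 32-bit word in which every bit below the highest set bit is also set.

```python
def next_step_bit(step_bitwise: int) -> int:
    """Add 1 to the sequence word, wrapped to 32 bits like a DInt bit pattern."""
    return (step_bitwise + 1) & 0xFFFFFFFF


# Walk through the "all lesser bits on" values from the question.
for completed in (1, 3, 7, 15, 31):
    single = next_step_bit(completed)
    # Each result is a power of two, so exactly one bit is set.
    print(f"{completed:>2} = {completed:06b}  ->  {single:>2} = {single:06b}")
```

Each row shows the run of ones rolling over into a single bit one position higher.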

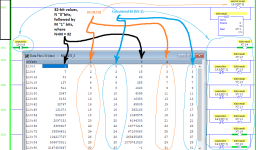

While doing the Log(x)/Log(2) trick will work, a more "efficient" method would be to use the following code (all tags are DInts. StepBitwise is your bit-set sequence word):

CLR (StepNo)

ADD (StepBitwise, 1, StepBit_SingleBit)

AND (StepBit_SingleBit , #32:aaaa_aaaa , Temp) NEQ ( Temp, 0) ADD (StepNo, 1, StepNo);

AND (StepBit_SingleBit , #32:cccc_cccc , Temp) NEQ ( Temp, 0) ADD (StepNo, 2, StepNo);

AND (StepBit_SingleBit , #32:f0f0_f0f0 , Temp) NEQ ( Temp, 0) ADD (StepNo, 4, StepNo);

AND (StepBit_SingleBit , #32:ff00_ff00 , Temp) NEQ ( Temp, 0) ADD (StepNo, 8, StepNo);

AND (StepBit_SingleBit , #32:ffff_0000 , Temp) NEQ ( Temp, 0) ADD (StepNo, 16, StepNo);

At this point, StepNo contains the bit position (1, 2, 3, 4, 5 ...) of the step that is waiting for its bit to be set. To get the number of the step that is complete, subtract 1.
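For anyone who wants to try the mask method on a PC first, here is a rough Python equivalent of the rungs above. It is a sketch, not PLC code: `MASKS` and `step_number` are names I made up, and it assumes a 32-bit word with all lesser bits set. Each mask selects the bit positions whose index has a particular binary digit set, so adding up the weights of the masks that hit rebuilds the position of the single bit.

```python
# (mask, weight) pairs: each mask covers the bit positions whose index
# has the binary digit worth `weight` set.
MASKS = (
    (0xAAAAAAAA, 1),
    (0xCCCCCCCC, 2),
    (0xF0F0F0F0, 4),
    (0xFF00FF00, 8),
    (0xFFFF0000, 16),
)


def step_number(step_bitwise: int) -> int:
    """Return the bit position of (step_bitwise + 1), assumed to be a power of two."""
    single_bit = (step_bitwise + 1) & 0xFFFFFFFF  # same as the ADD rung
    step_no = 0                                   # same as the CLR rung
    for mask, weight in MASKS:
        if single_bit & mask:                     # AND followed by NEQ 0
            step_no += weight                     # ADD the weight into StepNo
    return step_no


if __name__ == "__main__":
    for word in (0b1, 0b11, 0b111, 0b1111, 0b11111):
        print(f"{word:05b} -> waiting on bit {step_number(word)}")
```

Five compares and at most five adds replace the two logarithms and the divide, which is where the "efficiency" comes from.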

Keep in mind that this technique only works if StepBit_SingleBit has one and only one bit set. Your description of how you code your sequencer makes that likely, but if process changes have caused you to skip, say, step 3, and thus the 3 bit is never set, this will not work.
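If you want to guard against that case, one common check is that a value with exactly one bit set is non-zero and shares no bits with itself minus 1. The Python below is only a sketch of that test; `has_single_bit` is a hypothetical helper name, and the skipped-step word is an invented example.

```python
def has_single_bit(value: int) -> bool:
    """True when exactly one bit of the 32-bit value is set."""
    value &= 0xFFFFFFFF
    # Subtracting 1 clears the lowest set bit and sets everything below it,
    # so the AND is zero only when there was a single bit to begin with.
    return value != 0 and (value & (value - 1)) == 0


if __name__ == "__main__":
    normal = 0b0111 + 1    # bits 0..2 set, so the sum is one clean bit
    skipped = 0b1011 + 1   # bit 2 never set, so the sum has two bits
    print(has_single_bit(normal), has_single_bit(skipped))
```

When the check fails, the mask method's answer should not be trusted, and falling back to a fault or to a different method is the safer choice.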

I agree that Ron's method is probably better and more bulletproof. But this code should make Peter at least a smidge happier. Some of us don't forget his tips and twiddles.