Welcome to the forum!

I agree with the previous responses, but here are my two cents:

Analyzing the OP program

What the OP program tries to do is sample data at 1s intervals and totalize the flow at each 1s (=100cs) interval:

- (FLOW_LPM/60) is Volume/s

- (Volume/min) / (s/min) => Volume/s

- "*1" is integrating an assumed constant volume flow rate over 1s to calculate a Volume

- (Volume/s) * 1s => Volume

- "+ FLOW_TOT" totalizes the flow

- Presumably the OP program is also showing the total after 60s, perhaps with another timer that is not shown
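To make the arithmetic in the list above concrete, here is a minimal Python sketch of what the formula appears to do. The formula is reconstructed from the terms quoted above; the function name, the constant 30 L/min test flow, and treating "Volume" as liters (going by the name FLOW_LPM) are my assumptions, not taken from the OP program.

```python
def totalize_fixed_step(flow_lpm_samples, flow_tot=0.0):
    """Apply FLOW_TOT = (FLOW_LPM / 60) * 1 + FLOW_TOT once per sample.

    Assumes, as the OP formula does, that every sample represents exactly 1 s.
    """
    for flow_lpm in flow_lpm_samples:
        volume_per_s = flow_lpm / 60.0   # (Volume/min) / (s/min) => Volume/s
        flow_tot += volume_per_s * 1     # (Volume/s) * 1 s => Volume
    return flow_tot


# Hypothetical constant flow of 30 L/min for one minute.
total_60 = totalize_fixed_step([30.0] * 60)  # 60 totalizing steps in the minute
total_44 = totalize_fixed_step([30.0] * 44)  # only 44 totalizing steps in the minute
```

With 60 steps the total matches the true volume for the minute; with only 44 steps the same formula under-reports, because each step is still credited with only 1s of flow.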

So the math is correct, but the program has an assumption that the sampling occurs at 1s intervals, and that assumption is questionable at best.

What the OP program actually does is the same math, but at an effective average interval of around 1.36s (136cs, i.e. 60/44). This is essentially what the other responses are saying, and their solution is to make the actual interval closer to the 1s implied by the "*1" term of the formula.

Sidebar, N.B.: 1.36s (136cs, i.e. 36% average error, so maybe 72% max per step, or 720ms max on one scan?) seems a bit long. I would expect the timing accuracy to be closer to 1-2%, i.e. an average lost time of 10-20ms per totalizing step, and certainly no more than 5% (100ms max time lost on any one scan, so ~50ms expected average). So an alternative explanation is that the calculations are all being done with integers and truncation is occurring, e.g. FLOW_LPM is 59, and 59/60 is 0, for ~25% of the totalizing steps.
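The integer-truncation possibility can be illustrated with a small Python sketch. The sample values (59 on a quarter of the steps, 61 on the rest) are invented for illustration, and Python's `//` stands in for whatever integer division the PLC would perform; I do not know the data types the OP program actually uses.

```python
def totalize_integer(flow_lpm_samples):
    """Totalize with integer math only, so FLOW_LPM / 60 truncates toward zero."""
    flow_tot = 0
    for flow_lpm in flow_lpm_samples:
        flow_tot += (flow_lpm // 60) * 1   # 59 // 60 == 0, 61 // 60 == 1
    return flow_tot


# Hypothetical minute of 60 perfectly timed samples hovering around 60 L/min:
# 25% of them read 59, the other 75% read 61.
samples = [59] * 15 + [61] * 45

int_total = totalize_integer(samples)            # integer math
float_total = sum(s / 60.0 for s in samples)     # floating-point math
```

Here the integer total comes out around three quarters of the floating-point total even though the timing is perfect, which would look very much like a timing problem.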

One way to verify that the average totalizing step is 136cs is to count the number of totalizing steps per minute. If it is around 44, then timing is the problem; if it is 49-50 or more, then there is an additional source of inaccuracy.

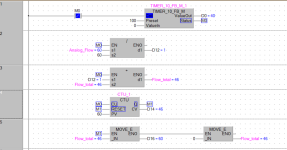

Fix the timing

As suggested in the previous responses, if the program keeps the existing formula, with its assumption of 1s (100cs) sampling intervals, then the solution must be to make reality conform to the assumption. @parky suggested using an interrupt routine, which should work. Another approach would be to change the timer counter target to ensure that 60 samples occur per minute. At present it seems to be 44 samples per minute with a target of 100; that suggests that changing the scan interval target from its current value of 100 to around 70-75 would yield the desired 1s sample rate and 60 samples per minute. That calibration could be made and might be an interesting experiment, but in practice it would need to be checked regularly, especially if the program ever changed.
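The 70-75 estimate is just a proportional scaling, sketched here in Python. The function name is hypothetical, and the sketch assumes the effective interval scales in proportion to the target; if the lost time per step is instead roughly fixed, the calibrated value would come out differently, which is one more reason it would need checking.

```python
def calibrated_target(current_target_cs, observed_per_min, desired_per_min=60):
    """Scale the timer target so the observed sample count rises to the desired one.

    Assumes the effective interval is proportional to the target (an assumption).
    """
    return current_target_cs * observed_per_min / desired_per_min


# 44 samples per minute observed with a target of 100 cs.
new_target = calibrated_target(100, 44)
```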

Fix the timing calculation

Another way to fix the timing issue would be to drop the assumption that the sample interval is 100cs. To do this, modify the formula to weight each sample by its actual interval duration (integration duration), i.e. use

- "* (PULSE_CURRENT/100.0)", i.e. (cs / (cs/s)) => s

instead of

- "*1"

That could still have some error, but it should be less, if timing is indeed the entire issue.
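As a rough Python sketch of that change: each sample is weighted by its measured duration rather than an assumed 1s. I am assuming PULSE_CURRENT holds the elapsed time in centiseconds since the previous totalizing step; the function name and the test data (44 steps of 136cs at a constant 30 L/min) are invented for illustration.

```python
def totalize_measured_step(samples, flow_tot=0.0):
    """Apply FLOW_TOT = (FLOW_LPM / 60) * (PULSE_CURRENT / 100.0) + FLOW_TOT.

    `samples` is a sequence of (flow_lpm, pulse_current_cs) pairs, where
    pulse_current_cs is assumed to be the actual elapsed time of the step in cs.
    """
    for flow_lpm, pulse_current_cs in samples:
        volume_per_s = flow_lpm / 60.0            # Volume/s
        seconds = pulse_current_cs / 100.0        # cs / (cs/s) => s
        flow_tot += volume_per_s * seconds        # (Volume/s) * s => Volume
    return flow_tot


# Hypothetical minute: 44 steps, each actually lasting 136 cs, at 30 L/min.
total_measured = totalize_measured_step([(30.0, 136)] * 44)
```

The 44 steps cover 59.84s in total, so the result lands just under the 30 L expected for a full minute, instead of the 22 L the "*1" version would report for the same data.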

Ignore timing interval

Another approach would be to recognize that the totalization formula is currently a weighted average, and instead of trying to weight the sample by the duration of the sample, simply sum the samples but also count the number of samples at each sample time:

FLOW_TOT <= FLOW_LPM + FLOW_TOT

SAMPLE_COUNT <= 1 + SAMPLE_COUNT

then, at the end of one minute, perform the average:

FLOW_TOT <= FLOW_TOT / SAMPLE_COUNT
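In Python terms, the sum-and-count idea looks roughly like the sketch below. The function name, the divide-by-zero guard, and the constant test flow are my additions; FLOW_TOT, FLOW_LPM and SAMPLE_COUNT follow the formulas above.

```python
def average_flow(flow_lpm_samples):
    """Sum the samples, count them, and divide at the end of the minute."""
    flow_tot = 0.0
    sample_count = 0
    for flow_lpm in flow_lpm_samples:
        flow_tot += flow_lpm        # FLOW_TOT <= FLOW_LPM + FLOW_TOT
        sample_count += 1           # SAMPLE_COUNT <= 1 + SAMPLE_COUNT
    if sample_count == 0:
        return 0.0                  # guard added here: no samples, no total
    return flow_tot / sample_count  # FLOW_TOT <= FLOW_TOT / SAMPLE_COUNT


# Hypothetical constant 30 L/min, sampled 44 or 60 times in the minute.
avg_44 = average_flow([30.0] * 44)
avg_60 = average_flow([30.0] * 60)
```

Because the result is the average flow in volume per minute taken over one minute, it is numerically the volume for that minute, and it does not depend on how many samples happened to land in the minute.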

This takes a different approach from that intended by the original program. But in a Gaussian-noise world with 40-60 samples per minute, I doubt it has any less validity than the timing interval corrections above. Even if we can accurately correct (or measure) the actual sample interval, we are still using a single FLOW_LPM sample from one scan, along with its (Gaussian?) noise, to represent the average flow over that entire sample interval. So whether we take 60 weighted samples (correct the sampling interval to 100cs), or 44 weighted samples (measure the sampling intervals), or 44 non-weighted samples over the minute (average however many samples we get), the expected errors and accuracy will be about the same.

P.S. There is no need to apologize for your English language skills; I am sure they are infinitely better than my skills in your first language.