Hey guys,

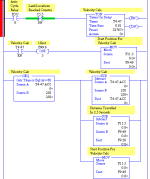

I am trying to calculate the linear speed of a machine. The machine runs off of an encoder, so I am using a looping timer to grab a snapshot of the encoder count every few seconds. Then I calculate the speed as distance/time.
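
In rough terms, the calculation looks like the sketch below. This is only an illustration in Python, not the attached program: the function name, the `counts_per_unit` scale factor, and the example numbers are all assumptions. It divides by the measured time between snapshots instead of the nominal timer preset, since the real interval between two snapshots may not match the preset exactly.

```python
def linear_speed(count_prev, count_now, t_prev, t_now, counts_per_unit):
    """Average speed between two encoder snapshots, in distance units per second.

    counts_per_unit is a hypothetical scale factor (encoder counts per inch,
    per mm, etc.); timestamps are in seconds.
    """
    elapsed = t_now - t_prev
    if elapsed <= 0:
        raise ValueError("snapshots must be taken at increasing times")
    # Convert the change in counts to distance, then divide by measured time.
    distance = (count_now - count_prev) / counts_per_unit
    return distance / elapsed


# Made-up example: 5000 counts in 2.0 s at 1000 counts per inch.
speed = linear_speed(12000, 17000, 10.0, 12.0, 1000.0)
print(speed)  # inches per second
```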

Is there any better way of doing this? The resulting speed varies somewhat. I am not sure if it is a flaw in the programming, or if the process is actually varying. I have no other way of measuring the linear speed accurately.
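
One check that might help separate the two cases is to estimate how coarse the measurement itself is. If each snapshot can be off by about one count, the computed speed can only be resolved to roughly one count's worth of distance divided by the sample interval. The sketch below assumes that one-count uncertainty; the function name and the numbers are hypothetical, not taken from the machine.

```python
def speed_resolution(counts_per_unit, interval_s, count_uncertainty=1):
    """Smallest speed step the snapshot method can resolve.

    Assumes the count difference over one interval is uncertain by
    count_uncertainty counts (an assumption, not a measured value).
    """
    if counts_per_unit <= 0 or interval_s <= 0:
        raise ValueError("counts_per_unit and interval_s must be positive")
    return count_uncertainty / (counts_per_unit * interval_s)


# Made-up example: 100 counts per inch sampled every 2 s.
print(speed_resolution(100.0, 2.0))  # speed step in inches per second
```

If the variation seen in the result is about the size of this step, it may just be count quantization, and a longer sample interval or averaging several samples would likely smooth it. If the variation is much larger, the process itself is more likely to be varying.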

Can someone take a look at the attached program and maybe offer suggestions?

John