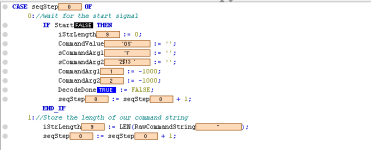

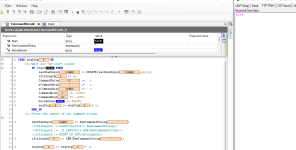

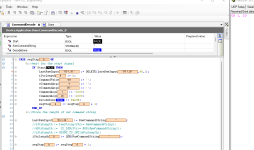

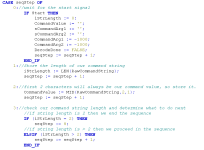

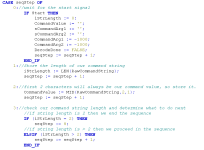

Just wanted to see if anyone can spot anything wrong with this, as my mind is being blown. Basically, I have created a TCP server string decoder that breaks the received string down into smaller pieces which I can then use.

For whatever reason, I'm finding that if I send an 8-character string, its length always shows as 9. I just tested and found that a 7-character string also shows as 9. If I send a 2-character string, it shows as just 2 characters, which is additionally confusing. The length seems to be correct up to 3 characters; when I add a 4th, it jumps to 5.

Not a huge deal. I have found that when I convert the various strings into ints, the mystery characters get truncated, but I'm more aggravated about the why. I expect it's a matter of ignorance on my part.
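
To pin down what the mystery characters are, one option is to dump the received data byte by byte before any decoding. The sketch below is in Python only as a stand-in for whatever the decoder is written in. The helper names `describe_payload` and `strip_trailing_control` are made up for illustration, and the sample payload with a trailing line feed is an assumption, not something I have confirmed is in my data.

```python
def describe_payload(payload: bytes) -> list[str]:
    """Return one 'index: hex repr' line per byte so hidden characters show up."""
    return [f"{i}: 0x{b:02X} {chr(b)!r}" for i, b in enumerate(payload)]


def strip_trailing_control(payload: bytes) -> bytes:
    """Drop trailing CR, LF and NUL bytes (assumed candidates for the extras)."""
    return payload.rstrip(b"\r\n\x00")


# Hypothetical payload: 8 visible characters plus a line feed added by the sender.
sample = b"12345678\n"

print(len(sample))                          # reported length, including the extra byte
for line in describe_payload(sample):
    print(line)                             # the last line exposes the non-printing byte
print(len(strip_trailing_control(sample)))  # length once trailing control bytes are gone
```

If the extra bytes turn out to be something other than CR, LF or NUL, the byte dump should at least show their values and where in the string they sit.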

Thanks
