mtech2766 said:

If I set the max input to 32767 (giving me 10 volt max input), can I then set the max output to 10 and it read accurately at 2.15 and 7.5?

...

And my input min would need to be set to 229 to compensate for the .07 Vdc offset?

I don't think so, see the TL;DR below.

The SCP Input Max. parameter should be 10000 for a 10VDC analog voltage signal, and the Input Min. parameter should be 70 for a 0.07VDC analog voltage signal (= 70mVDC at 1mVDC per count), or perhaps 0 for a 0VDC analog voltage signal.

Because of ambiguity in the previous posts, we cannot be completely sure of what the Scaled Min. and Scaled Max. values should be, but from the OP, I will assume what is wanted is for the output of the SCP instruction to be

- 7.5 for an analog voltage signal of +9.5VDC

- 0.0 for an analog voltage signal of 0VDC,

- Or this may be 0.0 for an analog voltage signal of 0.07VDC

We don't know the units of those 7.5 and 0.0 SCP output values; "ADC" was mentioned. I am guessing that is DC Amps, but it does not matter: a key assumption is that the current-voltage relationship of the current sensing device is linear, and that the voltage-count relationship of the PLC analog input card is also linear.

One way to do this would be to configure the SCP as follows:

- The digital output INT of the analog input card channel as the SCP Input parameter

- 0 (or 70) as the SCP Input Min. parameter

- 0 will be the channel input value when the analog input voltage signal is 0VDC

- 70 will be the channel input value when the analog input voltage signal is 0.07VDC = 70mVDC

- 0.0 as the SCP Scaled Min. parameter

- 9500 as the SCP Input Max. parameter

- 9500 will be the channel input value when the analog input voltage signal is 9.5VDC

- 7.5 as the SCP Scaled Max. parameter
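As a rough cross-check of the configuration above, here is a minimal Python sketch of the straight-line relationship those four parameters describe. The function name `scp` and its argument names are made up for illustration. It models only the linear math (no clamping and no INT rounding) and assumes 1 count per mVDC, as discussed in this thread.

```python
def scp(value, input_min, input_max, scaled_min, scaled_max):
    """Straight line through (input_min, scaled_min) and (input_max, scaled_max).

    Hypothetical helper: models only the linear relationship, not any
    clamping or integer rounding the real instruction may apply.
    """
    span_in = input_max - input_min
    span_out = scaled_max - scaled_min
    return scaled_min + (value - input_min) * span_out / span_in


# Assumed: Engineering Units, i.e. 1 count per mVDC (9500 counts = 9.5VDC).
for counts in (0, 70, 4750, 9500):
    zero_based = scp(counts, 0, 9500, 0.0, 7.5)     # Input Min. = 0
    offset_based = scp(counts, 70, 9500, 0.0, 7.5)  # Input Min. = 70
    print(f"{counts:5d} counts -> {zero_based:.4f} (min 0), "
          f"{offset_based:.4f} (min 70)")
```

Either choice of Input Min. gives 7.5 at 9500 counts; the two only differ in which input (0 or 70 counts) maps to 0.0.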

The only problem with this approach is that the SCP instruction would clamp the output at 7.5 max even if the analog input voltage signal went above 9.5VDC, so the operator might see a clamped 7.5 output when the input voltage signal was above 9.5VDC and the actual value was above 7.5. One way to fix this would be to use arbitrarily high values for the Input Max. and Scaled Max. parameters, but values that both (1) express the relationship correctly in a numerical sense, and (2) are easy to calculate, e.g.

- 15.0 as the SCP Scaled Max. parameter

- This is twice the 7.5 nominal scaled max

- 19000 as the SCP Input Max. parameter

- This is twice the 9500 nominal input max.

- However, if 70 is used for Input Min., corresponding to the Scaled Min. of 0.0, then use

- 18930 as the SCP Input Max. parameter
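The arithmetic behind 19000 and 18930 can be checked with a short Python sketch. The helper names are hypothetical. The idea is to double the input span measured from Input Min., not the raw Input Max., so that the slope of the line stays the same.

```python
import math


def extended_input_max(input_min, input_max, factor):
    """Input Max. that keeps the same slope when the scaled span is
    multiplied by `factor` (hypothetical helper, integer counts)."""
    return input_min + factor * (input_max - input_min)


def slope(input_min, input_max, scaled_min, scaled_max):
    """Scaled units per count for the given endpoints."""
    return (scaled_max - scaled_min) / (input_max - input_min)


# Input Min. = 0: doubling 7.5 -> 15.0 needs Input Max. = 19000.
print(extended_input_max(0, 9500, 2))    # 19000

# Input Min. = 70: the span is 9500 - 70 = 9430, so 70 + 2 * 9430 = 18930.
print(extended_input_max(70, 9500, 2))   # 18930

# Same slope before and after extending, in both cases.
print(math.isclose(slope(0, 9500, 0.0, 7.5), slope(0, 19000, 0.0, 15.0)))
print(math.isclose(slope(70, 9500, 0.0, 7.5), slope(70, 18930, 0.0, 15.0)))
```

Using 19000 together with an Input Min. of 70 would change the slope slightly, which is why 18930 is the matching value in that case.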

TL;DR

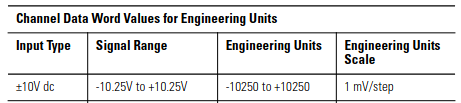

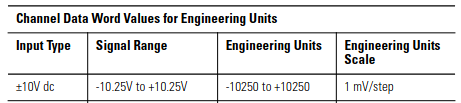

According to previous posts, the card is set for [Engineering Units] (±10250), not [Proportional Counts] (±32k). This depends on the following assumptions:

- The analog input card is the 1746-NI16V that @jimtech67 suggested (we still don't know this, so this is the first shaky assumption)

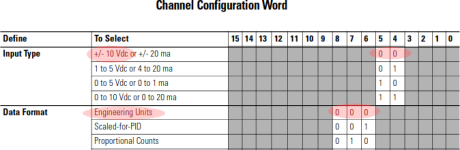

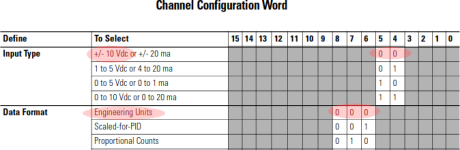

- IF the analog input channel configuration word is 0 (i.e. the default; this is the second shaky assumption):

-

mtech2766 said:

I am using engineering units input for -10 to +10 Vdc. (I was told it is best to not change the default setting in case the Ain module needs replaced).

- So this is the configuration:

- Bits 5-4 are 00, so

- the analog input voltage signal [Input Type] is ±10VDC (nominal)

- Bits 8-6 are 000, so

- the [Data Format] is Engineering Units,

- the analog voltage [Signal Range] is ±10.250VDC,

- the analog input digital INT range will be -10250 to +10250

- I.e. the analog input digital value will be

- -10250 counts when the analog voltage signal is -10.25VDC

- +10250 counts when the analog voltage signal is +10.25VDC

- 0 counts when the analog voltage signal is 0VDC

- 70 counts when the analog voltage signal is 0.07VDC

- i.e. when the analog voltage signal is 70mVDC above 0VDC, the input digital value is 70 counts, = 70mVDC / (1mVDC per count step), above 0 counts.

- Cf.