The sensor output needs to be within the limits of the input card. A good calibration of the sensor is needed to get a usable reading from it, and the same goes for the input card.

Together, you will need to look at both conversions and do a recalculation, like a map instruction or a scale.

Even a perfectly calibrated sensor and a calibrated input card will need a scale function.
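
For illustration, a map or scale instruction boils down to a linear conversion like the sketch below. This is plain Python rather than PLC code, and the function name `scale`, its parameters, and the 4-20 mA / 0-10 bar figures are assumptions made up for the example, not values from this thread.

```python
def scale(raw, raw_min, raw_max, eng_min, eng_max):
    """Linearly map a raw input value onto engineering units."""
    if raw_max == raw_min:
        raise ValueError("raw_min and raw_max must differ")
    # Engineering units per unit of raw signal (the gain of the conversion).
    gain = (eng_max - eng_min) / (raw_max - raw_min)
    return eng_min + (raw - raw_min) * gain


# Hypothetical example: a 4-20 mA signal representing 0-10 bar.
pressure = scale(12.0, 4.0, 20.0, 0.0, 10.0)
print(pressure)  # 5.0
```

Mid-range signal (12 mA) lands on mid-range pressure (5 bar), which is what you would expect from a purely proportional conversion.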

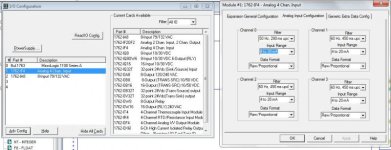

Not so, the scaling is (can be) done on the input module itself, and you can use these scaling parameters to "adjust" a badly calibrated sensor AND a badly calibrated analog input module.

"Proper" calibration of the sensor could be expensive, but provided it gives a proportional signal that is within the operating range of the analog input module, it would be OK.

"Proper" calibration of the analog input module requires taking the system down while calibration is performed (program mode on the PLC), so it may not be an option.

"Calibration" of both the sensor and the input module is just giving each of them parameters to adjust the signal conversion for gain and zero offset. So it really doesn't matter whether everything is "calibrated" or not; you can just adjust end-to-end using the on-board scaling of the input module.

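As a rough sketch of what adjusting end-to-end means in numbers: take two reference points where you know the real process value and note the signal the channel actually sees, then work out which engineering values to enter against the nominal signal limits. The function name `end_to_end_scaling`, the 4-20 mA defaults, and the sample readings are all assumptions for illustration. It is plain Python, not anything the module itself runs.

```python
def end_to_end_scaling(point_lo, point_hi, sig_lo=4.0, sig_hi=20.0):
    """Return (eng_lo, eng_hi) to enter against the nominal signal limits.

    Each point is (signal seen by the channel, true process value),
    taken at two different known process conditions.
    """
    (raw1, pv1), (raw2, pv2) = point_lo, point_hi
    if raw1 == raw2:
        raise ValueError("the two reference points need different signals")
    # Combined gain and zero offset of sensor plus input channel.
    gain = (pv2 - pv1) / (raw2 - raw1)
    offset = pv1 - gain * raw1
    # Extrapolate to the nominal signal limits used by the scaling parameters.
    return gain * sig_lo + offset, gain * sig_hi + offset


# Hypothetical readings: channel sees 5.0 mA at 0 % and 21.0 mA at 100 %.
eng_lo, eng_hi = end_to_end_scaling((5.0, 0.0), (21.0, 100.0))
print(eng_lo, eng_hi)  # -6.25 93.75
```

With those two engineering values entered against 4 mA and 20 mA, the channel reads 0 % and 100 % at the two reference conditions, without touching either device's own calibration.
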
Of course, this does mean you have to "re-calibrate" the on-board scaling if you have to change the input sensor, or the analog input module. And because you can write to the channel scaling parameters, many times these can be updated automatically in PLC code, especially the "zero" end.
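
The automatic update of the "zero" end could look something like the sketch below: when the process is known to be at zero, shift both engineering limits by whatever the channel is reading. This is a plain-Python illustration under my own assumptions. The name `rezero` and the `max_shift` sanity limit are hypothetical, and in a real PLC the result would be written to the channel's scaling parameters by whatever mechanism the platform provides.

```python
def rezero(eng_lo, eng_hi, reading_at_zero, max_shift):
    """Shift the engineering limits so the current reading becomes zero.

    reading_at_zero is the scaled value the channel shows while the
    process is known to be at zero. max_shift is an assumed sanity
    limit that rejects corrections too large to be plain drift.
    """
    if abs(reading_at_zero) > max_shift:
        raise ValueError("zero error too large, check the sensor and wiring")
    # A pure offset correction: the span (gain) is left unchanged.
    return eng_lo - reading_at_zero, eng_hi - reading_at_zero


# Hypothetical case: a 0-10 bar channel shows 0.25 bar with the line vented.
new_lo, new_hi = rezero(0.0, 10.0, 0.25, max_shift=0.5)
print(new_lo, new_hi)  # -0.25 9.75
```

The limit check is there because an automatic correction will otherwise happily hide a failed sensor or a wiring fault.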

In over 30 years working with all platforms of A-B analog input and output modules, I have never had the need to "calibrate" any of them. In fact, I don't think calibration existed pre-Logix5000.

It is never an "ideal" scenario scaling end-to-end, but sometimes it is the cheapest and quickest way to make an analog channel read the real-world process variable adequately.